Professional supplier of AI and robot teaching equipment

The Wheeled Humanoid Robot is an embodied AI platform for education, research, and real-world applications. It integrates an omnidirectional mobile base, dual seven-degree-of-freedom (7-DOF) bionic arms, multimodal perception (vision, speech, and tactile sensing), and an onboard high-performance computing unit capable of running large language models such as DeepSeek and Qwen. The robot supports autonomous navigation with SLAM-based mapping, precise manipulation through coordinated dual-arm control, and natural human-robot interaction driven by multimodal AI reasoning. Its open architecture supports secondary development, allowing researchers and developers to work on robotics, reinforcement learning, and embodied intelligence algorithms. A perception-to-decision-to-action loop lets the robot adapt its behavior in dynamic environments. Application scenarios include education, scientific research, retail services, logistics, intelligent manufacturing, and home assistance, making the robot a flexible, scalable platform for intelligent robotics in industrial and service settings.

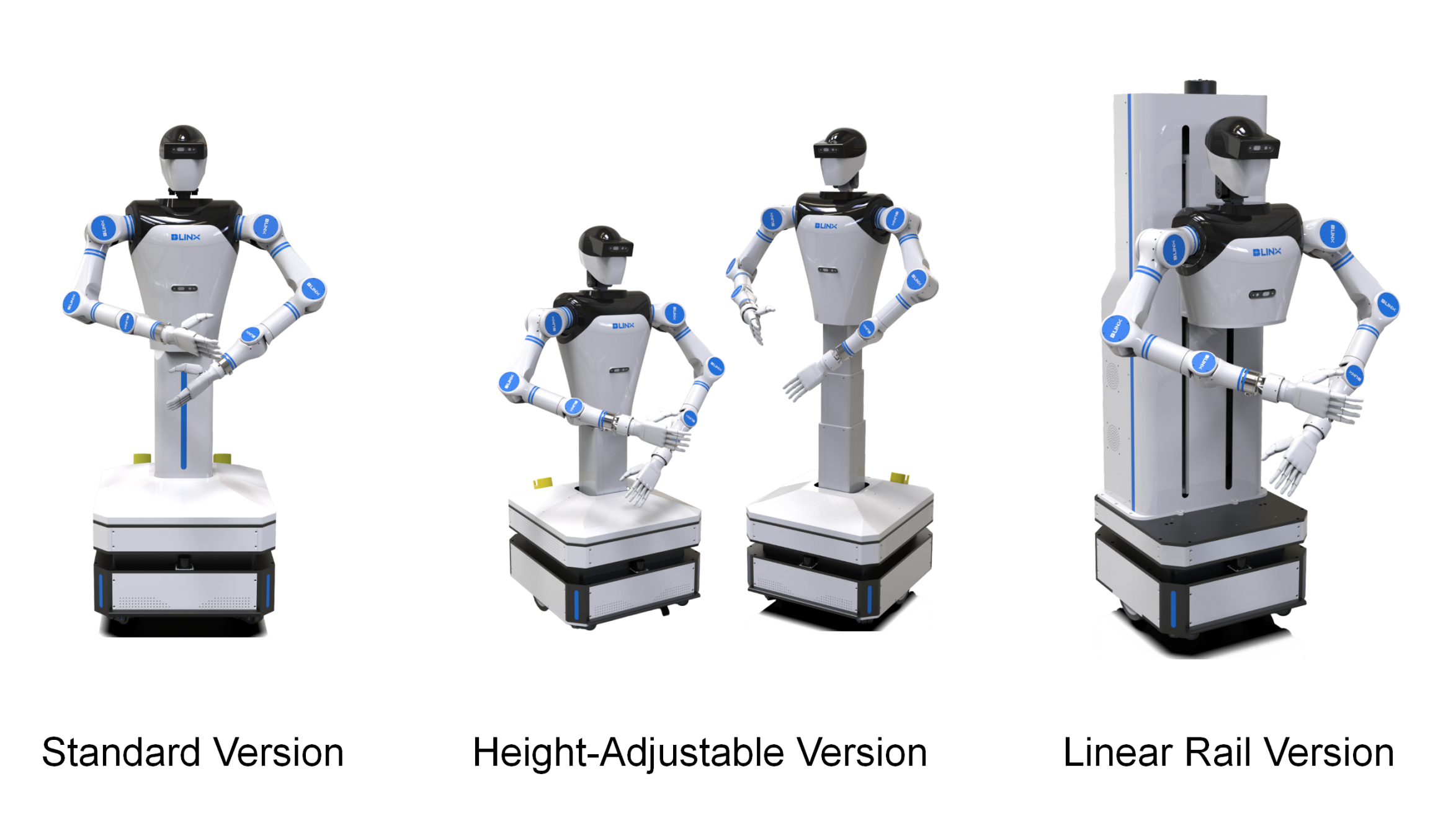

The product is available in three versions: Standard Version, Height-Adjustable Version, and Linear Rail Version, as described below:

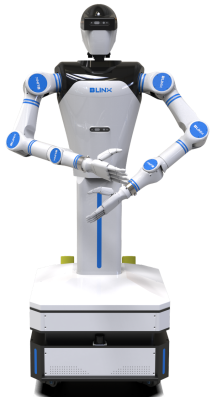

1. Standard Version

The robot has a fixed height of approximately 1600 mm and no lifting mechanism. It is suited to tasks at standard working heights, such as desks and fixed workstations.

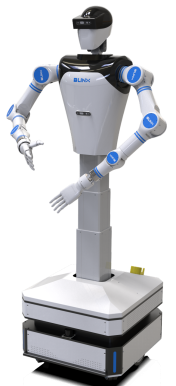

2. Height-Adjustable Version

The upper body is mounted on a lifting column with approximately 400 mm of travel, giving an adjustable height from 1473 mm to 1873 mm. It is suited to multi-level shelves and workstations of varying heights.

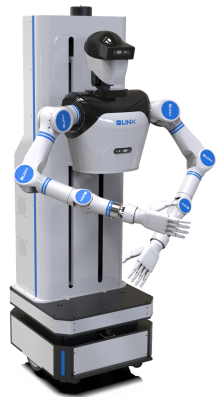

3. Linear Rail Version

The upper body is mounted on a linear rail with up to 800 mm of travel (compared with about 400 mm for the Height-Adjustable Version). The dual arms can reach down to the floor, making this version suitable for tasks that span a large vertical range, such as handling objects at floor level, cleaning, and transferring items to tables or shelves.

The robot combines vision, speech, and other sensors in a closed “perception–decision–execution” loop, forming a multimodal environmental awareness network. Using onboard large language models, it can interpret complex user instructions, infer the user's actual intent, and generate corresponding decisions and responses.

For example, in a smart manufacturing scenario, given the voice command “move the screwdriver on the table to the blue box on the shelf,” the system performs structured reasoning to identify the target object (screwdriver), the source location (table), the destination (blue box), and where that destination is (shelf). With the help of the vision system, it then generates a step-by-step execution plan and carries out the operation. During execution, the system continuously fuses visual, tactile, and positional data to refine its actions in real time.
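The reasoning step above turns free-form speech into a structured task and then into an ordered plan. The sketch below illustrates only that data flow. On the robot, the parsing is done by the large language model; here a fixed regular expression stands in for it, and the names `PickPlaceTask`, `parse_instruction`, and `plan_steps`, as well as the step wording, are hypothetical.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PickPlaceTask:
    """Structured fields extracted from a pick-and-place instruction."""
    target: str                          # object to move, e.g. "screwdriver"
    source: str                          # where the object is now, e.g. "table"
    destination: str                     # container or spot to place it, e.g. "blue box"
    destination_location: Optional[str]  # where the destination is, e.g. "shelf"


# Hypothetical fixed pattern standing in for LLM-based parsing. It only accepts
# "move the <object> on the <source> to the <destination> [on the <location>]".
_PATTERN = re.compile(
    r"move the (?P<target>[\w ]+?) on the (?P<source>[\w ]+?) "
    r"to the (?P<destination>[\w ]+?)(?: on the (?P<location>[\w ]+))?$"
)


def parse_instruction(text: str) -> PickPlaceTask:
    """Turn a command into a PickPlaceTask, or raise ValueError if unsupported."""
    match = _PATTERN.match(text.strip().rstrip(".").lower())
    if match is None:
        raise ValueError(f"unsupported instruction: {text!r}")
    return PickPlaceTask(
        target=match["target"],
        source=match["source"],
        destination=match["destination"],
        destination_location=match["location"],  # None if not mentioned
    )


def plan_steps(task: PickPlaceTask) -> List[str]:
    """Expand the structured task into an ordered list of high-level steps."""
    place_area = task.destination_location or task.destination
    return [
        f"navigate to {task.source}",
        f"locate {task.target} with vision",
        f"grasp {task.target}",
        f"navigate to {place_area}",
        f"locate {task.destination} with vision",
        f"place {task.target} in {task.destination}",
    ]


if __name__ == "__main__":
    task = parse_instruction("Move the screwdriver on the table to the blue box on the shelf")
    for number, step in enumerate(plan_steps(task), start=1):
        print(number, step)
```

Keeping the structured task separate from the plan means the same planner can be reused no matter how the fields were extracted, whether by a regex, an LLM, or a GUI.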

The dual-arm system uses in-house 7-DOF humanoid robotic arms with fully open-source low-level control, supporting secondary development. The arms offer a wide range of motion and high precision, enabling natural, human-like movements.

A single controller drives both arms, coordinating their motion and preventing collisions between them. In a garment-folding task, for example, the system can quickly generate motion plans that keep the two arms from interfering with each other. The electronic skin continuously senses changes in the surroundings and, combined with large-model decision-making, lets the system adjust motion paths and postures on the fly for more precise manipulation.
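One common way to check that two arms do not interfere is to model each link as a capsule (a line segment with a radius) and compute the smallest gap between the arms. The sketch below uses the standard closest-points-between-segments method; it is not the vendor's controller. The joint positions are assumed to come from forward kinematics, and the link radius (0.05 m) and safety margin (0.03 m) are hypothetical values.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def _sub(a: Point, b: Point) -> Point:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Point, b: Point) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _clamp01(x: float) -> float:
    return max(0.0, min(1.0, x))


def segment_distance(p1: Point, q1: Point, p2: Point, q2: Point, eps: float = 1e-12) -> float:
    """Shortest distance between segments p1-q1 and p2-q2 (closest-point method)."""
    d1, d2, r = _sub(q1, p1), _sub(q2, p2), _sub(p1, p2)
    a, e, f = _dot(d1, d1), _dot(d2, d2), _dot(d2, r)
    if a <= eps and e <= eps:          # both segments degenerate to points
        s = t = 0.0
    elif a <= eps:                     # first segment is a point
        s, t = 0.0, _clamp01(f / e)
    else:
        c = _dot(d1, r)
        if e <= eps:                   # second segment is a point
            s, t = _clamp01(-c / a), 0.0
        else:
            b = _dot(d1, d2)
            denom = a * e - b * b      # zero when the segments are parallel
            s = _clamp01((b * f - c * e) / denom) if denom > eps else 0.0
            t = (b * s + f) / e
            if t < 0.0:                # clamp t, then recompute s for that endpoint
                s, t = _clamp01(-c / a), 0.0
            elif t > 1.0:
                s, t = _clamp01((b - c) / a), 1.0
    c1 = tuple(p + s * d for p, d in zip(p1, d1))
    c2 = tuple(p + t * d for p, d in zip(p2, d2))
    return math.dist(c1, c2)


def min_clearance(left: Sequence[Point], right: Sequence[Point], link_radius: float) -> float:
    """Smallest surface-to-surface gap between the two arms' capsule-shaped links."""
    gaps: List[float] = []
    for i in range(len(left) - 1):
        for j in range(len(right) - 1):
            axis_gap = segment_distance(left[i], left[i + 1], right[j], right[j + 1])
            gaps.append(axis_gap - 2 * link_radius)
    return min(gaps)


def arms_too_close(left: Sequence[Point], right: Sequence[Point],
                   link_radius: float = 0.05, margin: float = 0.03) -> bool:
    """True if the arms come within `margin` metres of each other."""
    return min_clearance(left, right, link_radius) < margin
```

A planner could run `arms_too_close` on every candidate waypoint and reject or re-plan any that return `True`. A negative clearance means the capsules already overlap.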

The arm surfaces of the dual-arm system are covered with capacitive electronic skin made of highly sensitive flexible sensing materials. It can detect approaching objects at distances of 5–15 cm, giving centimeter-level spatial awareness for obstacle avoidance.

In human–robot collaboration scenarios, a dual-layer safety mechanism (a warning before contact and an emergency stop upon contact) significantly improves operational safety.
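The two safety layers can be expressed as a small decision function over each electronic-skin reading. This is a minimal sketch under stated assumptions: the warning threshold is set to the 15 cm upper end of the sensing range described above, and the speed-scaling values and all names (`SafetyAction`, `safety_action`, `SPEED_SCALE`) are hypothetical, not the robot's actual API.

```python
from enum import Enum
from typing import Optional


class SafetyAction(Enum):
    NORMAL = "normal"
    WARN_AND_SLOW = "warn_and_slow"    # layer 1: object sensed before contact
    EMERGENCY_STOP = "emergency_stop"  # layer 2: physical contact detected


# Assumed warning threshold: the upper end of the 5-15 cm sensing range.
WARN_DISTANCE_CM = 15.0

# Hypothetical speed limits applied to arm motion for each action.
SPEED_SCALE = {
    SafetyAction.NORMAL: 1.0,
    SafetyAction.WARN_AND_SLOW: 0.3,
    SafetyAction.EMERGENCY_STOP: 0.0,
}


def safety_action(proximity_cm: Optional[float], in_contact: bool,
                  warn_distance_cm: float = WARN_DISTANCE_CM) -> SafetyAction:
    """Pick the safety response from one electronic-skin reading.

    proximity_cm is the nearest detected object distance, or None if nothing
    is within sensing range; in_contact is True when the skin reports touch.
    """
    if in_contact:
        return SafetyAction.EMERGENCY_STOP   # contact always overrides proximity
    if proximity_cm is not None and proximity_cm <= warn_distance_cm:
        return SafetyAction.WARN_AND_SLOW
    return SafetyAction.NORMAL
```

Checking contact first ensures that the stop layer can never be masked by the warning layer.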

A hierarchical reinforcement learning framework, combined with cross-scenario transfer learning, allows the robot to keep adapting to complex environments.

For instance, to train the robot with reinforcement learning on a task such as “bring me a glass of water,” the task can be decomposed into sub-tasks: object recognition (locating the cup), navigation (moving to the target location), and grasping (selecting an appropriate grasp posture). Because each sub-task is smaller and can be learned separately, this decomposition shortens training cycles.
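To make the decomposition concrete, the sketch below shows only the upper level of a two-level hierarchy. A tabular Q-learning agent learns in which order to invoke the three sub-tasks, and each sub-task is abstracted as a single action. The toy states, rewards, and hyperparameters are assumptions chosen for illustration. In a real system, each sub-task would be its own learned low-level policy, and this is not the robot's actual training code.

```python
import random
from typing import List, Tuple

# Sub-tasks from the "bring me a glass of water" example, treated as high-level actions.
SUBTASKS = ["locate_cup", "navigate_to_cup", "grasp_cup"]
# Toy abstract states: 0 = cup not found, 1 = cup located, 2 = at the cup, 3 = holding it.
GOAL_STATE = 3


def step(state: int, action: int) -> Tuple[int, float]:
    """Toy environment: only the sub-task that fits the current state makes progress."""
    next_state = state + 1 if action == state else state
    reward = 10.0 if next_state == GOAL_STATE else -1.0  # -1 for every non-goal step
    return next_state, reward


def greedy(q_row: List[float]) -> int:
    """Index of the highest-valued sub-task (ties go to the lowest index)."""
    return max(range(len(q_row)), key=lambda a: q_row[a])


def train_high_level_policy(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.9,
                            epsilon: float = 0.2, seed: int = 0,
                            max_steps: int = 50) -> List[List[float]]:
    """Tabular Q-learning over sub-tasks (the upper level of the hierarchy)."""
    rng = random.Random(seed)
    q = [[0.0] * len(SUBTASKS) for _ in range(GOAL_STATE)]
    for _ in range(episodes):
        state = 0
        for _ in range(max_steps):
            # Epsilon-greedy exploration over sub-tasks.
            if rng.random() < epsilon:
                action = rng.randrange(len(SUBTASKS))
            else:
                action = greedy(q[state])
            next_state, reward = step(state, action)
            future = 0.0 if next_state == GOAL_STATE else max(q[next_state])
            q[state][action] += alpha * (reward + gamma * future - q[state][action])
            state = next_state
            if state == GOAL_STATE:
                break
    return q


def greedy_plan(q: List[List[float]], limit: int = 10) -> List[str]:
    """Read off the learned sub-task sequence by following the greedy policy."""
    state, plan = 0, []
    while state != GOAL_STATE and len(plan) < limit:
        action = greedy(q[state])
        plan.append(SUBTASKS[action])
        state, _ = step(state, action)
    return plan
```

Because the high-level agent chooses among only three sub-tasks instead of raw motor commands, its search space is tiny. This is the intuition behind the claim that decomposition shortens training.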

Through continuous interaction with and learning from its environment, the robot can refine its behavior model over time and adapt quickly to new application scenarios, forming an embodied intelligence system. Together, reinforcement learning and this self-improvement move humanoid robots toward general-purpose intelligent agents capable of autonomous decision-making.

The robot is equipped with a wide range of sensors, including depth cameras, speech recognition modules, LiDAR, ultrasonic ranging sensors, and electronic skin, forming a comprehensive environmental perception system.

In practice, the built-in voice module supports audio detection, speech recognition, and sound source localization. Together with the depth vision systems mounted on the head and torso, it captures multimodal interaction data such as speech, gestures, facial expressions, objects, and components.
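Sound source localization is often based on the time difference of arrival (TDOA) between microphones. The sketch below shows the basic idea for two microphones: it estimates the delay with plain cross-correlation and converts that delay to a bearing under a far-field assumption. The two-microphone layout, spacing, and sample rate are hypothetical, and the robot's module may use a different method. Production systems often use more microphones and weighted variants such as GCC-PHAT.

```python
import math
from typing import Sequence

SPEED_OF_SOUND_M_S = 343.0  # approximate value in air at about 20 °C


def estimate_delay_samples(mic_a: Sequence[float], mic_b: Sequence[float], max_lag: int) -> int:
    """Return the lag (in samples) by which mic_b trails mic_a, via cross-correlation."""
    best_lag, best_score = 0, -math.inf
    for lag in range(-max_lag, max_lag + 1):
        # Correlate mic_a[i] with mic_b[i + lag] over the overlapping samples.
        score = sum(
            mic_a[i] * mic_b[i + lag]
            for i in range(len(mic_a))
            if 0 <= i + lag < len(mic_b)
        )
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def direction_of_arrival_deg(delay_samples: int, sample_rate_hz: float,
                             mic_spacing_m: float) -> float:
    """Far-field bearing from the delay; 0 deg is broadside, positive means toward mic A."""
    tau = delay_samples / sample_rate_hz           # time difference of arrival in seconds
    ratio = SPEED_OF_SOUND_M_S * tau / mic_spacing_m
    ratio = max(-1.0, min(1.0, ratio))             # guard against noise pushing |ratio| > 1
    return math.degrees(math.asin(ratio))


def max_physical_lag(sample_rate_hz: float, mic_spacing_m: float) -> int:
    """Largest delay the geometry allows, used to bound the lag search."""
    return math.ceil(mic_spacing_m / SPEED_OF_SOUND_M_S * sample_rate_hz)
```

For example, with microphones 0.1 m apart sampled at 16 kHz, the search only needs lags of up to 5 samples, and a 3-sample delay corresponds to a bearing of about 40 degrees.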

Using the onboard central computing unit, the robot performs natural language understanding and instruction parsing during human–robot interaction, executes the requested actions, and supports human–robot collaboration and voice-controlled AI applications.